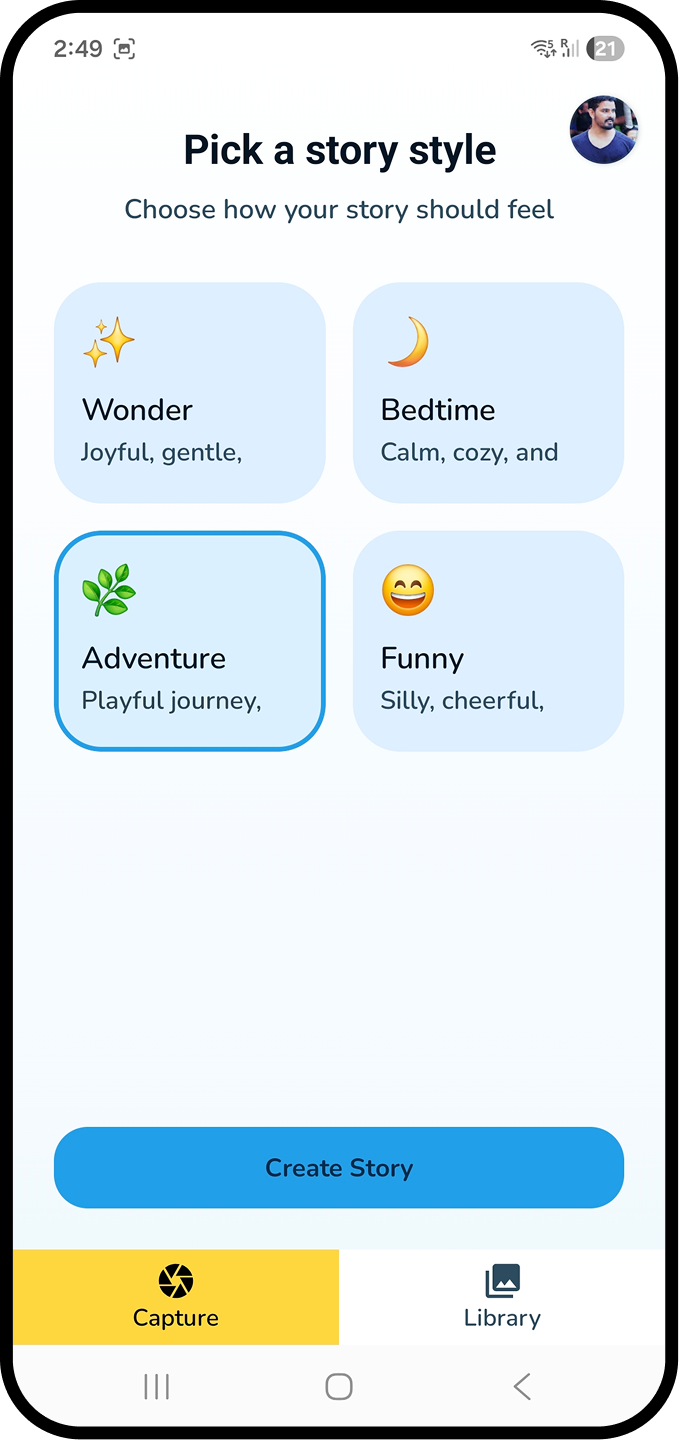

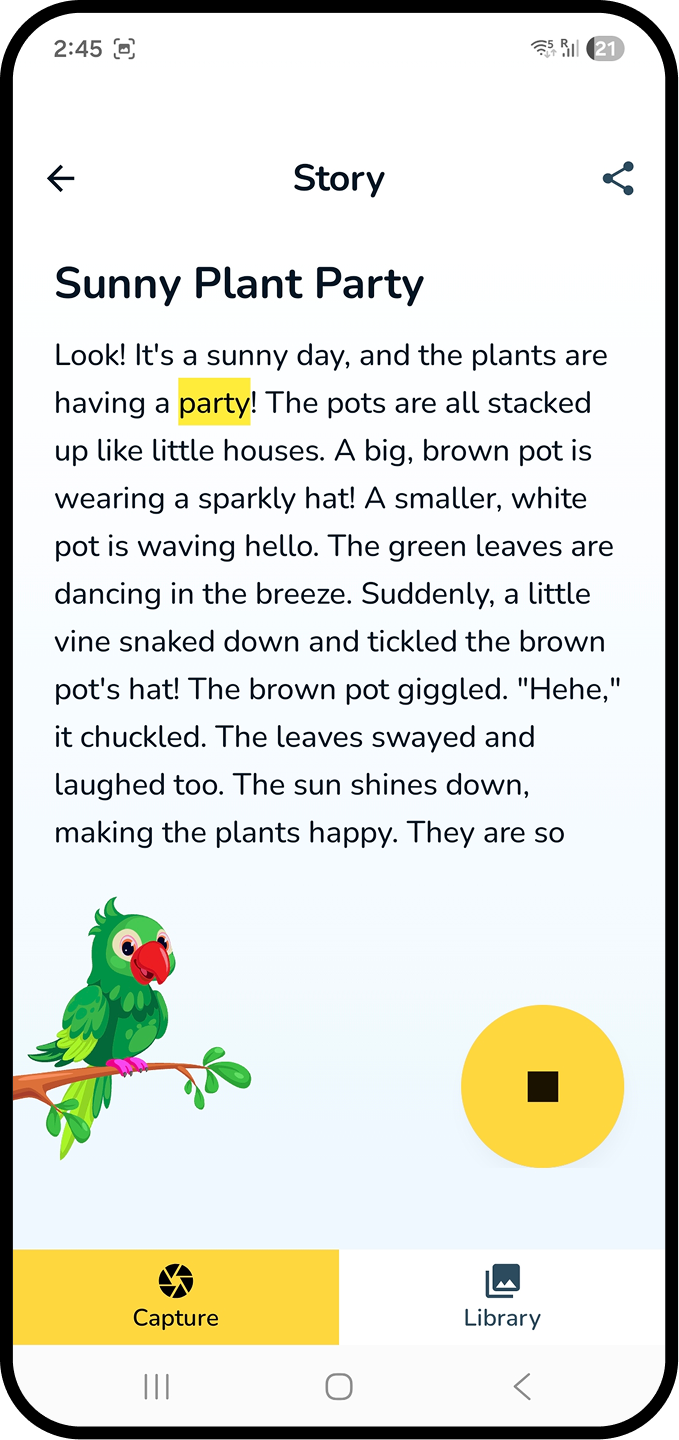

Karaoke words meet a human voice.

The story screen is built for emerging readers first. It offers TTS narration with word-level highlighting, per-sentence pause and replay, and a reading-pace slider for parents.

- Typography sized for ages 5–10 (18pt body, 1.5 line-height).

- Highlight colour tested against dyslexia-friendly contrast ratios.

- Optional audio replay became the most-used feature within the first few days.